One of the key races right now that will determine which party controls the United States Senate next year is the Colorado race between incumbent Senator Mark Udall (D) and his challenger, Representative Cory Gardner (R). Polling over the past month has shown a small but persistent lead for Gardner; he’s been ahead of Udall by one or two points, and Nate Silver gives Gardner a roughly 80 percent chance of prevailing.

These aggregated polls are a very useful tool for political prognosticators, offering by far the most reliable estimates of what the actual vote will be on Election Day. But what if they’re wrong? Not that there’s anything wrong with a given poll, but what if all the polls are systematically off in one direction or another?

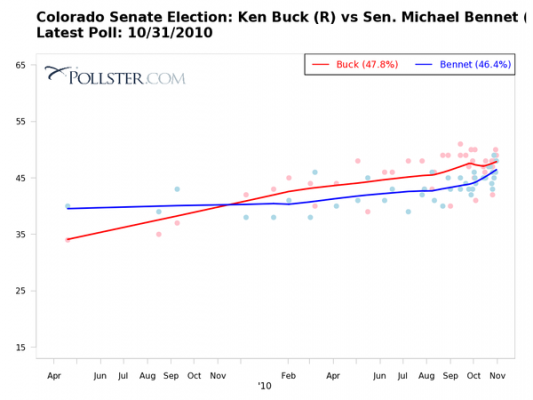

That’s actually not impossible. Indeed, for a great example of this, we need look no further than the last U.S. Senate race in Colorado, the 2010 contest between appointed Senator Michael Bennet (D) and Ken Buck (R). Note the polling estimates for that race: Buck was ahead of Bennet by several points through nearly all of 2010. The final polling aggregate suggested that Buck would beat Bennet by more than a point, and Nate Silver estimated a 65 percent chance that Buck would unseat Bennet.

In the end, however, Bennet pulled out the win, claiming 50.9 percent of the two-party vote. How did Bennet manage to beat Buck by two points when the polls had him trailing by a point or two up until Election Day? And is there a message of hope in there for Mark Udall?

What’s important to remember here is that polling, while generally quite reliable, has a few blind spots. Any reported poll includes some sort of estimate by the polling organization of just which respondents will actually show up on Election Day. In a midterm election, when fewer than half of eligible voters may show up, that can be tricky to determine. In 2010, the polls systematically underestimated Democratic voter turnout in Colorado and several other states.

One reason this happened likely has to do with turnout by Latino voters, who showed up in higher numbers than expected and voted strongly Democratic. Few mainstream pollsters were conducting their surveys in Spanish (which costs extra) and thus missed those people who were uncomfortable answering a poll in English but were nonetheless likely to vote Democratic. Polls similarly underestimated support for Western state Democratic Senators like Harry Reid (Nevada) and Barbara Boxer (California) that year for this same reason. (Yes, as bad as 2010 was for Democrats, it could have been worse.)

Another reason may have something to do with the Democratic ground game in Colorado. Bennet famously built on the successful Obama 2008 strategy of establishing field offices all over the state, which deployed volunteers into nearby neighborhoods with address lists and scripts to encourage turnout. This may have helped to modestly increase turnout right near Election Day, activating some of the same people who might have reported to pollsters just a few days earlier that they didn’t plan to vote.

While these trends help to explain how Bennet pulled off an upset in 2010, they’re pretty slender reeds for Udall or other Democrats to cling to today. For one thing, pollsters tend not to repeat mistakes: they learn from previous elections and try to get more reliable estimates the next time around. For another, the Democrats (in Colorado, anyway) might not have the same ground game dominance they’ve enjoyed in recent elections.

Finally, we should remember that even while polls may occasionally miss the mark, they’re just as likely to overstate Democratic support as to understate it. Udall might be doing somewhat better than current polls suggest, or he might be doing worse. We’ll have the answer on that in just about a week.